Greg Bryant and Josh Gordon

Virtual Microsystems

Berkeley, Ca

Conference Proceedings of

The Eighth West Coast Computer Faire

San Francisco, Ca

March 18 - 20, 1983

Macros allow you to take a big, difficult, repetitive program and break it down into interesting, easy-to-work-with chunks. More important, if you're going to transport your code to a different system, macros let you reduce the system-dependent part to its smallest denominator, making portability problems seemingly disappear. Reading this note, we hope, will help you understand what macros are and how to use them.

Our case is of particular interest to microcomputer users who are considering changing processors or are transporting assembly-level software between two microprocessors. There are two ways to go: 1) automatic translation, where you actually convert the code pound for pound so that it runs on the target machine, or 2) simulation, where you write a program that makes believe it's another machine and performs that machine's instructions one at a time as given. With higher-level languages, these two ideas are called compiling and interpreting, respectively.

We will write the simulator in the assembly language of the target machine. Working in assembly language lets us concentrate on the speed of the simulator and, depending on the machine, occasionally makes the translation process easier. This makes some sense, since machine talk is pretty much at one level. Besides, simulation in a higher-level language is another ball of wax, where variables and subroutines take the place of the macros we're about to talk about.

A simulator takes its structure from the instructions of the machine you're going to simulate. That is, the bulk of the program, the simulation code itself, is merely a bunch of labels named after instructions. When you've fetched the next instruction to be simulated, you jump to the label where you'll find the simulation of that instruction.

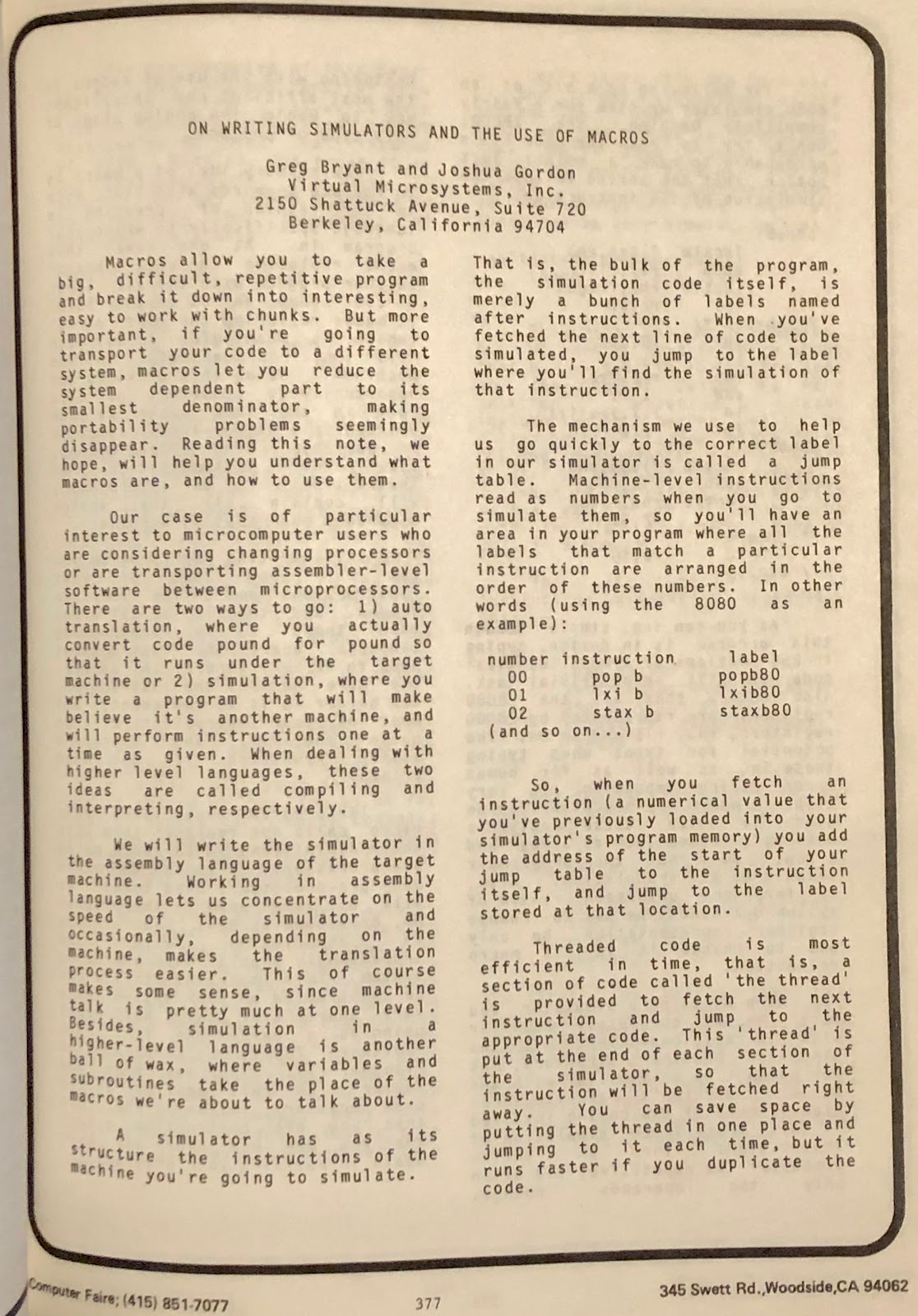

The mechanism that gets us quickly to the correct label in our simulator is called a jump table. Machine-level instructions read as numbers when you go to simulate them, so you'll have an area in your program where the labels for the instructions are arranged in the order of these numbers. In other words (using the 8080 as an example):

| number | instruction | label |
|---|---|---|
| 00 | nop | nop80 |
| 01 | lxi b | lxib80 |
| 02 | stax b | staxb80 |
| (and so on) | ... | ... |

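So, when you next fetch an instruction (a numerical value that you've previously loaded into your simulator's program memory), you add the instruction, scaled by the size of a table entry, to the address of the start of your jump table, and jump to the label stored at that location. With two-byte addresses this means doubling the instruction number first, which is what the 'add r1,r1' in the Z8000 thread below does.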
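The same dispatch can be sketched in C. This is a minimal illustration, not the authors' code: the memory array, the register variables, and the helper names init_jump_table, step and unimpl80 are hypothetical, and only the three opcodes from the table are simulated. C scales an array index by the size of an entry automatically, which is the doubling the assembly version has to do by hand.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical simulated 8080 state: just what these handlers touch. */
static uint8_t mem[65536];  /* simulated 8080 memory */
static uint16_t pc;         /* simulated program counter */
static uint8_t a, b, c;     /* simulated registers A, B, C */

typedef void (*handler)(void);

/* One handler per opcode, named after the instruction as in the table. */
static void nop80(void) { pc += 1; }

static void lxib80(void) {             /* lxi b: C = low byte, B = high byte */
    c = mem[(uint16_t)(pc + 1)];
    b = mem[(uint16_t)(pc + 2)];
    pc += 3;
}

static void staxb80(void) {            /* stax b: store A at address BC */
    mem[(uint16_t)((b << 8) | c)] = a;
    pc += 1;
}

static void unimpl80(void) {
    assert(!"opcode not simulated in this sketch");
}

/* The jump table: entry n holds the handler for opcode n. */
static handler jump_table[256];

static void init_jump_table(void) {
    for (int i = 0; i < 256; i++)
        jump_table[i] = unimpl80;
    jump_table[0x00] = nop80;
    jump_table[0x01] = lxib80;
    jump_table[0x02] = staxb80;
}

/* Fetch the opcode at pc and jump through the table; C scales the
   index by sizeof(handler), so no explicit doubling is needed. */
static void step(void) {
    jump_table[mem[pc]]();
}
```

Calling step() repeatedly runs the simulated program one instruction at a time; an opcode that has no handler yet trips the assertion in unimpl80.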

Threaded code is the most efficient in time. A section of code called 'the thread' fetches the next instruction and jumps to the appropriate code, and this thread is put at the end of each section of the simulator so that the next instruction is fetched right away. You can save space by putting the thread in one place and jumping to it each time, but the simulator runs faster if you duplicate the code.
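Standard C has no way to jump to a computed address, but GCC and Clang provide a "labels as values" extension (&&label and goto *expression) that makes a faithful sketch of threaded code possible. The tiny three-opcode machine here (0 = halt, 1 = increment A, 2 = decrement A) and the name run_threaded are hypothetical; the point is that the fetch-and-jump, written once as the THREAD macro, is copied to the end of every handler rather than shared.

```c
#include <assert.h>
#include <stdint.h>

/* Runs a program for a hypothetical three-opcode machine and returns
   the final value of its A register. Opcodes: 0 = halt, 1 = increment
   A, 2 = decrement A. Needs GCC or Clang ("labels as values"). */
static unsigned run_threaded(const uint8_t *code) {
    static void *const table[] = { &&op_halt, &&op_inc, &&op_dec };
    const uint8_t *ip = code;  /* simulated program counter */
    unsigned a = 0;            /* simulated A register */

/* The thread: fetch the next opcode, advance ip, jump to its handler. */
#define THREAD                  \
    do {                        \
        assert(*ip < 3);        \
        goto *table[*ip++];     \
    } while (0)

    THREAD;          /* start the first instruction */
op_inc:
    a++;
    THREAD;          /* duplicated thread: no return to a central loop */
op_dec:
    a--;
    THREAD;
op_halt:
#undef THREAD
    return a;
}
```

Replacing each THREAD with a jump back to a single shared copy would save space at the cost of one extra jump per simulated instruction, which is the tradeoff described above.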

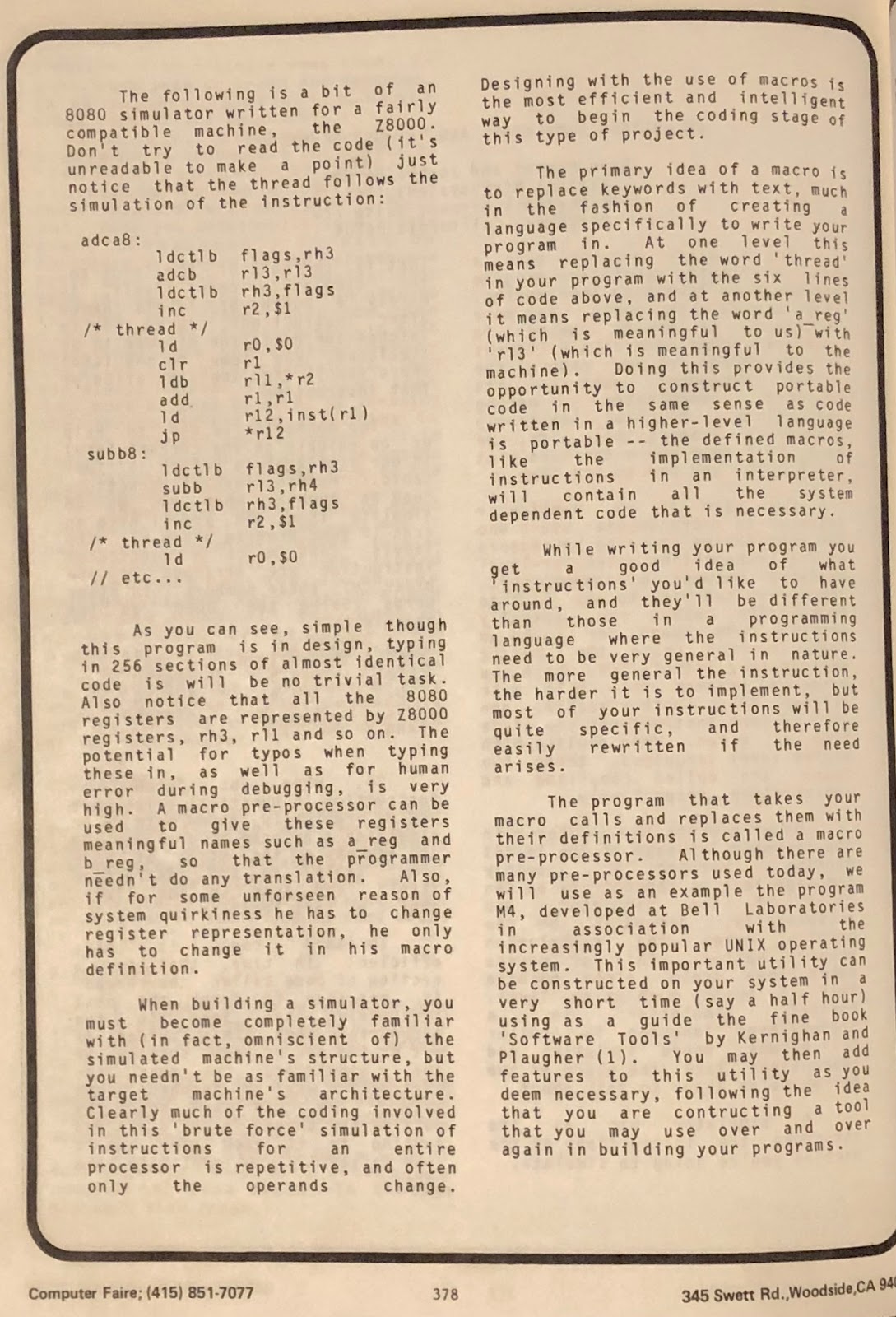

The following is a bit of an 8080 simulator written for a fairly compatible machine, the Z8000. Don't try to read the code (it's unreadable to make a point); just notice that the thread follows the simulation of each instruction:

```
adca80:
        ldctlb  flags,rh3
        adcb    rl3,rl3
        ldctlb  rh3,flags
        inc     r2,$1
        /* thread */
        ld      r0,$0
        clr     r1
        ldb     rl1,*r2
        add     r1,r1
        ld      r12,inst(r1)
        jp      *r12
subb80:
        ldctlb  flags,rh3
        subb    rl3,rh4
        ldctlb  rh3,flags
        inc     r2,$1
        /* thread */
        ld      r0,$0
        // etc ...
```

As you can see, simple though this program is in design, typing in 256 sections of almost identical code is no trivial task. Also notice that all the 8080 registers are represented by Z8000 registers: rh3, rl1 and so on. The potential for typos when typing these in, as well as for human error during debugging, is very high. A macro pre-processor can be used to give these registers meaningful names such as a_reg and b_reg, so that the programmer needn't do any translation. Also, if some unforeseen system quirk forces him to change the register representation, he only has to change it in his macro definitions.

When building a simulator, you must become completely familiar with (in fact, omniscient of) the simulated machine's structure, but you needn't be as familiar with the target machine's architecture. Clearly much of the coding involved in this 'brute force' simulation of instructions for an entire processor is repetitive, and often only the operands change. Designing with the use of macros is the most efficient and intelligent way to begin the coding stage of this type of project.

The primary idea of a macro is to replace keywords with text, much as if you were creating a language specifically to write your program in. At one level this means replacing the word 'thread' in your program with the six lines of code above, and at another level it means replacing the word a_reg (which is meaningful to us) with 'rl3' (which is meaningful to the machine). Doing this lets you construct portable code in the same sense that code written in a higher-level language is portable: the defined macros, like the implementations of instructions in an interpreter, will contain all the system-dependent code that is necessary.

While writing your programs you get a good idea of what 'instructions' you'd like to have around, and they'll be different than those in a programming language where the instructions need to be very general in nature. The more general the instruction, the harder it is to implement, but most of your instructions will be quite specific, and therefore easily re-written if the need arises.

The program that takes your macro calls and replaces them with their definitions is called a macro pre-processor. Although many macro pre-processors are in use today, we will use as an example M4, developed at Bell Laboratories in association with the increasingly popular UNIX operating system. This important utility can be constructed on your system in a very short time (say a half hour) using as a guide the fine book 'Software Tools' by Kernighan and Plauger (1). You may then add features to it as you see fit, keeping in mind that you are constructing a tool that you will use over and over again in building your programs.

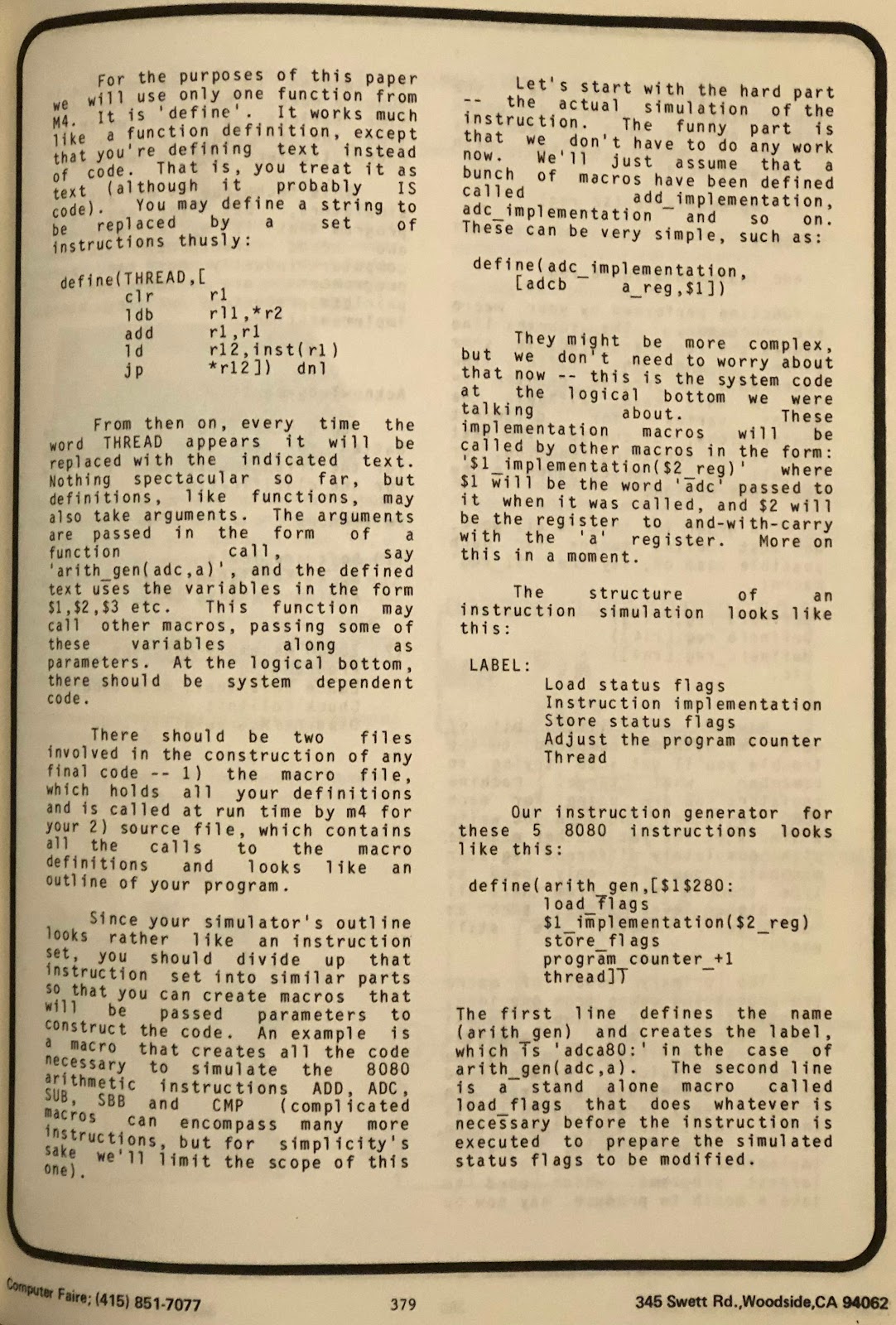

For the purposes of this paper we will use only one function from M4: 'define'. It works much like a function definition, except that you're defining text instead of code. That is, you treat it as text (although it probably IS code). You may define a string to be replaced by a set of instructions thusly:

```
define(THREAD,[
        clr     r1
        ldb     rl1,*r2
        add     r1,r1
        ld      r12,inst(r1)
        jp      *r12]) dnl
```
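The effect of a definition like this is plain text replacement. The C function below is a minimal sketch of that one idea, assuming a single argument-free definition and treating a "word" as a run of letters, digits and underscores. replace_word is a hypothetical helper, not part of M4; a real M4 also keeps a table of many definitions and rescans its output for further macro calls.

```c
#include <assert.h>
#include <ctype.h>
#include <stddef.h>
#include <string.h>

/* Copy src to out, replacing each whole-word occurrence of name with
   text. "THREADS" and "my_THREAD" are left alone because they are
   different words. out must have room for the result (outsz bytes). */
static void replace_word(const char *src, const char *name,
                         const char *text, char *out, size_t outsz) {
    size_t n = 0, namelen = strlen(name), textlen = strlen(text);
    const char *p = src;
    while (*p != '\0') {
        if (isalnum((unsigned char)*p) || *p == '_') {
            /* Scan one whole word. */
            const char *start = p;
            while (isalnum((unsigned char)*p) || *p == '_')
                p++;
            size_t wlen = (size_t)(p - start);
            const char *piece = start;
            size_t len = wlen;
            if (wlen == namelen && memcmp(start, name, wlen) == 0) {
                piece = text;   /* the defined word: substitute */
                len = textlen;
            }
            assert(n + len < outsz);
            memcpy(out + n, piece, len);
            n += len;
        } else {
            /* Punctuation and spaces are copied through unchanged. */
            assert(n + 1 < outsz);
            out[n++] = *p++;
        }
    }
    out[n] = '\0';
}
```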

From then on, every time the word THREAD appears it will be replaced by the indicated text. Nothing spectacular so far, but definitions, like functions, may also take arguments. The arguments are passed in the form of a function call, say 'arith_gen(adc,a)', and the defined text refers to them as $1, $2, $3 and so on. A macro may call other macros, passing some of these arguments along as parameters. At the logical bottom, there should be the system-dependent code.
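Parameter incorporation can be sketched the same way. The function below, a hypothetical helper named expand_args, copies a macro body and substitutes the call's arguments for $1 through $9. Unlike M4, it does not go on to rescan the result for further macro calls such as adc_implementation, and in this sketch a reference to a missing argument simply expands to nothing.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Copy body into out, replacing $1..$9 with args[0]..args[8].
   out must have room for the result (outsz bytes, outsz > 0). */
static void expand_args(const char *body, const char *const args[],
                        int nargs, char *out, size_t outsz) {
    size_t n = 0;
    for (const char *p = body; *p != '\0'; p++) {
        if (p[0] == '$' && p[1] >= '1' && p[1] <= '9') {
            int k = p[1] - '1';                 /* $1 names args[0] */
            const char *arg = (k < nargs) ? args[k] : "";
            size_t len = strlen(arg);
            assert(n + len < outsz);
            memcpy(out + n, arg, len);
            n += len;
            p++;                                /* skip the digit too */
        } else {
            assert(n + 1 < outsz);
            out[n++] = *p;
        }
    }
    assert(outsz > 0);
    out[n] = '\0';
}
```

With the arguments adc and a, the body "$1$280:" becomes the label "adca80:", and "$1_implementation($2_reg)" becomes "adc_implementation(a_reg)".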

There should be two files involved in the construction of any final code: 1) the macro file, which holds all your definitions and is read by M4 at run time, and 2) the source file, which contains all the calls to the macro definitions and looks like an outline of your program.

Since your simulator's outline looks rather like an instruction set, you should divide up that instruction set into similar parts so that you can create macros that will be passed parameters to construct the code. An example is a macro that creates all the code necessary to simulate the 8080 arithmetic instructions ADD, ADC, SUB, SBB and CMP (complicated macros can encompass many more instructions, but for simplicity's sake, we'll limit the scope of this one).

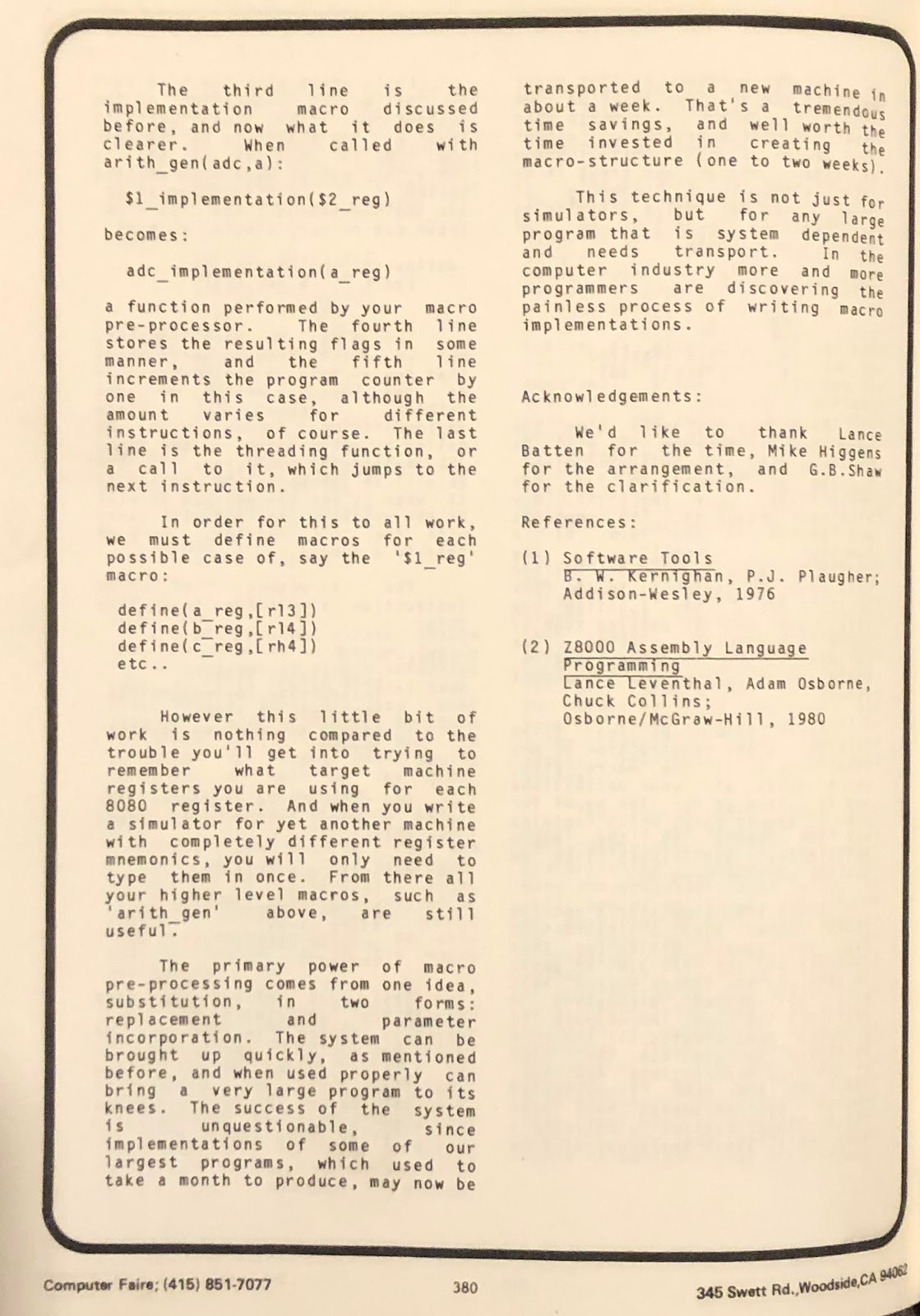

Let's start with the hard part: the actual simulation of the instruction. The funny part is that we don't have to do any work now. We'll just assume that a bunch of macros have been defined called add_implementation, adc_implementation and so on. These can be very simple, such as:

```
define(adc_implementation,
        [adcb   a_reg,$1])
```

They might be more complex, but we don't need to worry about that now; this is the system code at the logical bottom we were talking about. These implementation macros will be called by other macros in the form '$1_implementation($2_reg)', where $1 will be the word 'adc' passed in when the outer macro was called, and $2 will be the register to add-with-carry to the 'a' register. More on this in a moment.

The structure of an instruction simulation looks like this:

```
LABEL:
        Load status flags
        Instruction implementation
        Store status flags
        Adjust the program counter
        Thread
```

Our instruction generator for these five 8080 instructions looks like this:

```
define(arith_gen,[$1$280:
        load_flags
        $1_implementation($2_reg)
        store_flags
        program_counter_+1
        thread])
```

The first line defines the name (arith_gen) and creates the LABEL, which is 'adca80:' in the case of arith_gen(adc,a). The second line is a stand-alone macro called load_flags that does whatever is necessary, before the instruction is executed, to prepare the simulated status flags to be modified.

The third line is the implementation macro discussed before, and now what it does is clearer. When called with arith_gen(adc,a):

$1_implementation($2_reg)

becomes:

adc_implementation(a_reg)

a function performed by your macro pre-processor. The fourth line stores the resulting flags in some manner, and the fifth line increments the program counter by one in this case, although the amount varies for different instructions, of course. The last line is the threading function, or a call to it, which jumps to the next instruction.
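The C preprocessor is itself a macro pre-processor, and its ## operator pastes tokens together much as $1$280 does, so arith_gen can be imitated in C. In this sketch the register array, the one-bit carry flag, host_flags (standing in for the host's flag register) and the register assignments are all assumptions; only add and adc are implemented, and each generated handler simply returns instead of threading to the next instruction.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical simulated state for this sketch. */
static uint8_t regs[8];      /* simulated 8080 registers */
static unsigned carry;       /* simulated carry flag, 0 or 1 */
static uint16_t pc;          /* simulated program counter */
static unsigned host_flags;  /* stands in for the host's flag register */

/* Register-name macros: the "$2_reg" layer. Indices are assumptions. */
#define a_reg regs[7]
#define b_reg regs[0]

/* Move the simulated carry into the "host" flags and back again. */
#define load_flags  (host_flags = carry)
#define store_flags (assert(host_flags <= 1), carry = host_flags)

/* Implementation macros: the system-dependent "logical bottom".
   Each sets host_flags to the carry out; adc also uses it as carry in. */
#define add_implementation(r)                     \
    do {                                          \
        unsigned sum = a_reg + (r);               \
        a_reg = (uint8_t)sum;                     \
        host_flags = sum > 0xFF;                  \
    } while (0)
#define adc_implementation(r)                     \
    do {                                          \
        unsigned sum = a_reg + (r) + host_flags;  \
        a_reg = (uint8_t)sum;                     \
        host_flags = sum > 0xFF;                  \
    } while (0)

/* op##r##80 builds the name as $1$280 builds the label, and
   op##_implementation(r##_reg) mirrors $1_implementation($2_reg). */
#define arith_gen(op, r)                  \
    static void op##r##80(void) {         \
        load_flags;                       \
        op##_implementation(r##_reg);     \
        store_flags;                      \
        pc += 1;                          \
    }

arith_gen(add, b)  /* defines addb80 */
arith_gen(adc, a)  /* defines adca80 */
arith_gen(adc, b)  /* defines adcb80 */
```

Here arith_gen(adc, a) expands to a function named adca80 whose body calls adc_implementation(a_reg), and a_reg in turn expands to regs[7], mirroring the two levels of substitution described above.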

In order for all this to work, we must define macros for each possible case. So for the '$2_reg' macros:

```
define(a_reg,[rl3])
define(b_reg,[r14])
define(c_reg,[rh4])
etc ...
```

However, this little bit of work is nothing compared to the trouble you'll get into trying to remember which target machine registers you are using for each 8080 register. And when you write a simulator for yet another machine with completely different register mnemonics, you will only need to type the new ones in once. From there, all your higher-level macros, such as 'arith_gen' above, are still useful.
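In C the same separation might look like this: only the register map at the top knows where each 8080 register lives, and the handlers below it are written purely in 8080 terms. The host_r array and the TARGET_B switch are hypothetical stand-ins for two different target machines' registers.

```c
#include <assert.h>  /* used by the checks in the usage example */
#include <stdint.h>

/* Hypothetical host register file standing in for a target machine's
   registers (on the Z8000 these would be names such as rl3). */
static uint8_t host_r[16];

/* The only machine-dependent part: where each 8080 register lives.
   TARGET_B is a hypothetical switch selecting a second machine. */
#ifdef TARGET_B
#define a_reg host_r[7]
#define b_reg host_r[8]
#define c_reg host_r[9]
#else
#define a_reg host_r[3]
#define b_reg host_r[4]
#define c_reg host_r[5]
#endif

/* Handlers written purely in 8080 terms; they need no change when the
   register map above is swapped. */
static void movab80(void) { a_reg = b_reg; }  /* mov a,b */
static void movca80(void) { c_reg = a_reg; }  /* mov c,a */
```

Compiling with TARGET_B defined moves every register without touching a single handler, which is the payoff the paragraph above describes.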

The primary power of macro pre-processing comes from one idea, substitution, in two forms: replacement and parameter incorporation. The system can be brought up quickly, as mentioned before, and when used properly can bring a very large program under control. It has certainly paid off for us: some of our largest programs, which used to take a month to implement on a new machine, may now be transported in about a week. That's a tremendous time savings, and well worth the time invested in creating the macro structure (one to two weeks).

This technique is not just for simulators, but for any large program that is system-dependent and needs to be transported. In the computer industry more and more programmers are discovering the painless process of writing macro implementations.

Acknowledgements: We'd like to thank Lance Batten for the time, Mike Higgens for the arrangement, and G.B. Shaw for the clarification.

References:

(1) Software Tools, B.W. Kernighan, P.J. Plauger; Addison-Wesley, 1976

(2) Z8000 Assembly Language Programming, Lance Leventhal, Adam Osborne, Chuck Collins; Osborne/McGraw-Hill, 1980